Learners expect fast feedback; teams are stretched across LMSs, portals and paper. Education AI from Zenovah means systems that ground answers in your approved materials (retrieval / RAG), route high-stakes marking to humans, and plug into the tools you already run — not a generic chatbot trained on the open web.

South African institutions must treat learner and guardian data carefully. We scope flows around the Protection of Personal Information Act (POPIA): what is stored, where it lives, who can see it, and how models are called. It is the same engineering discipline we apply in our AI development work for regulated sectors.

What we build (the offer)

- Curriculum Q&A — assistants that cite your syllabus, policies and handbooks; optional guardrails so outputs stay on-brand

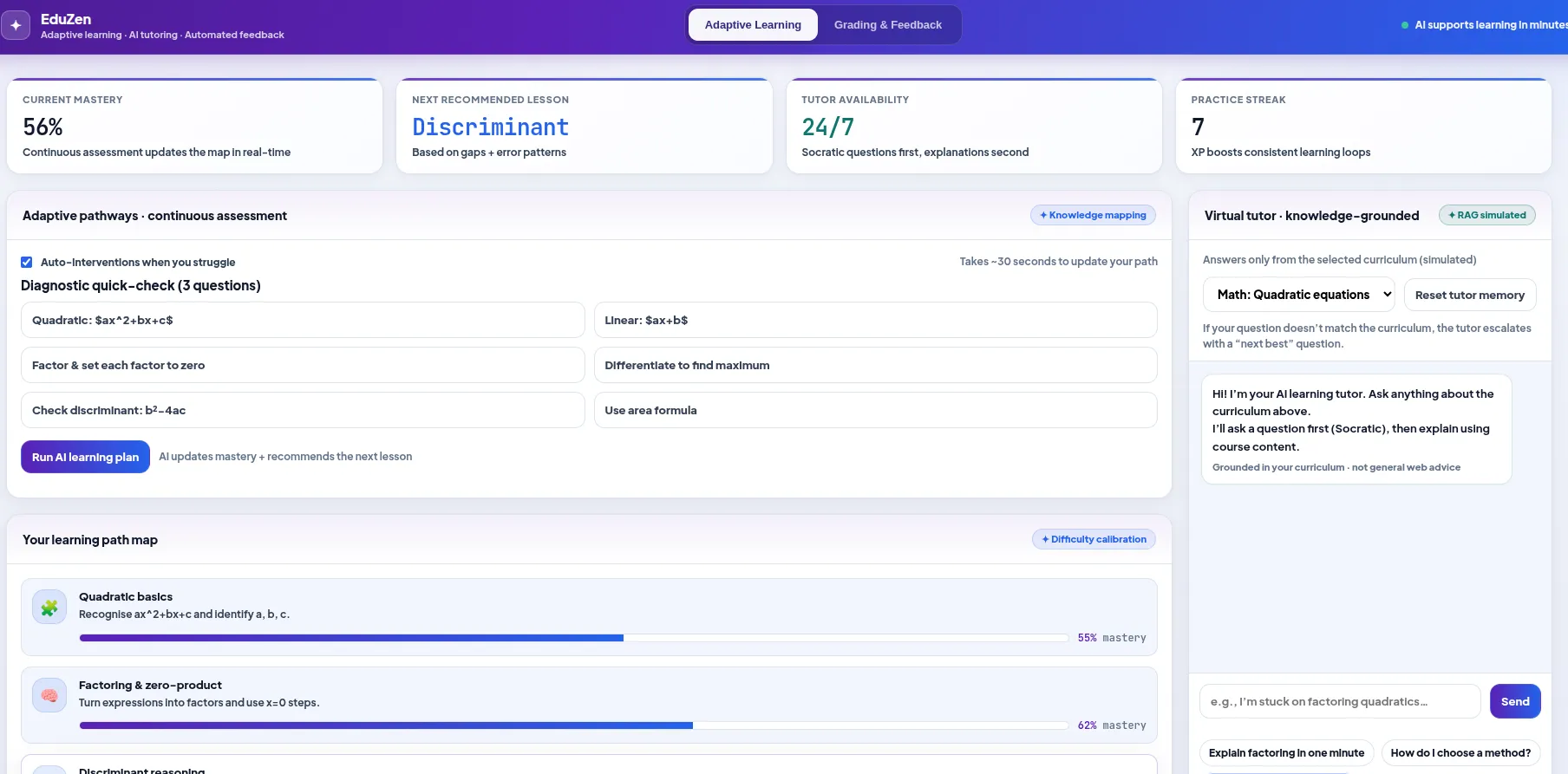

- Adaptive & guided paths — sequencing, practice prompts and interventions driven by your content rules, not a black-box vendor “score”

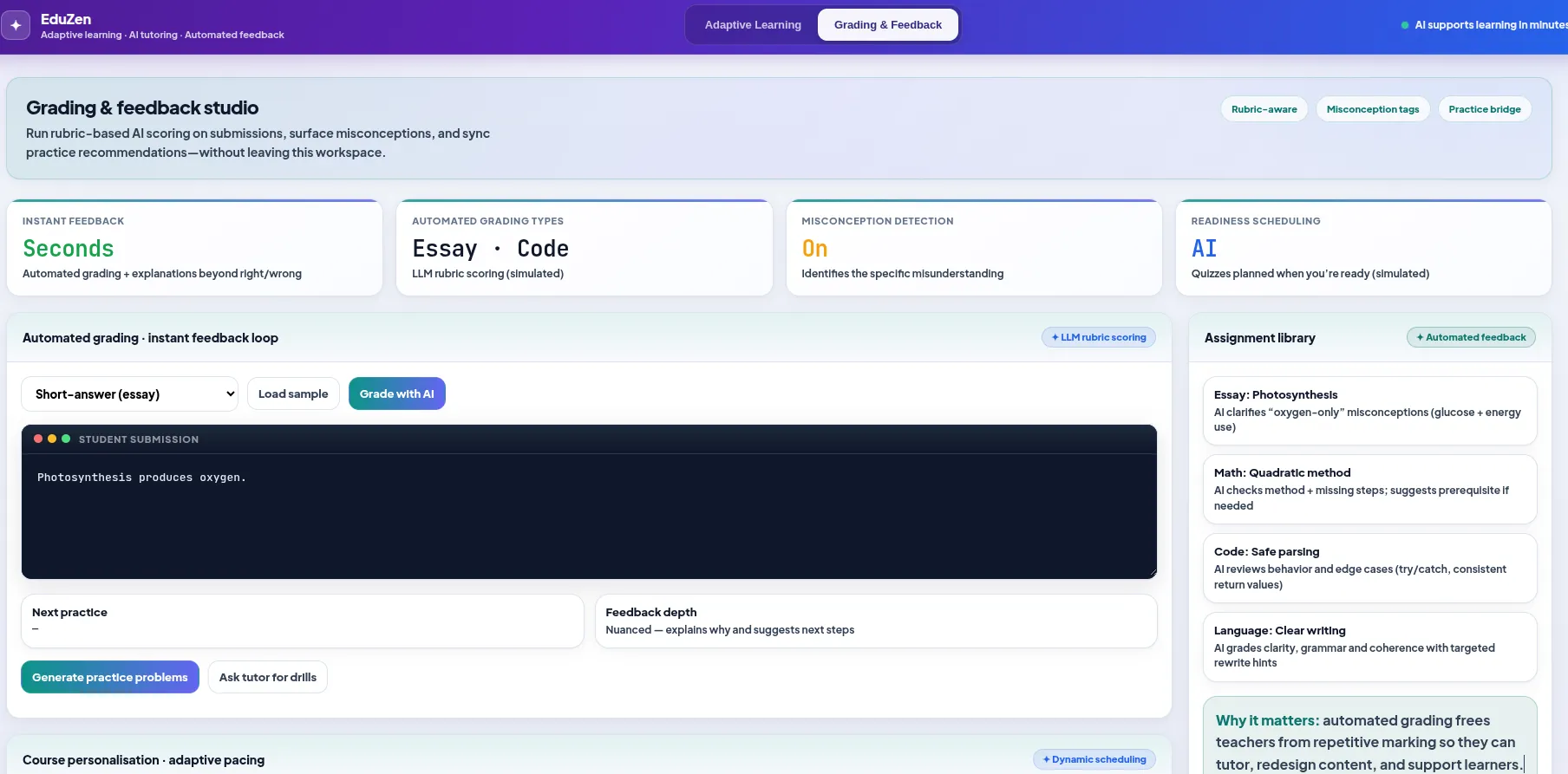

- Grading & feedback workflows — rubric-aligned drafts for short answers, code or structured tasks, with a human review queue where you need it

- LMS & data integration — APIs, SSO-aware patterns, exports to your gradebook or data store; scoped to your environment

The sections below show how this works in learning and assessment, with examples, followed by what Zenovah delivers end-to-end and how to get in touch.

Learning & tutoring

Adaptive learning here is not magic personalisation sold as a SKU. It is automation around your content: track attempts, surface gaps, and choose the next step from material you control. LLMs extend that with explanations and dialogue, still grounded in retrieval from your documents so answers are defensible in class.
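To make "track attempts, surface gaps, choose the next step" concrete, here is a minimal Python sketch. It is an illustration, not a description of a shipped Zenovah component: the `Attempt` and `ContentRule` types, the threshold values and the resource names are all hypothetical, and a real build would read rules from your own content configuration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Attempt:
    topic: str
    correct: bool


@dataclass
class ContentRule:
    # Hypothetical rule authored by the institution: which resource to serve
    # when a learner's mastery on a topic falls below the threshold.
    topic: str
    mastery_threshold: float
    remedial_resource: str


def mastery_by_topic(attempts: list[Attempt]) -> dict[str, float]:
    """Return the fraction of correct attempts for each topic seen so far."""
    totals: dict[str, list[int]] = {}
    for a in attempts:
        right, seen = totals.setdefault(a.topic, [0, 0])
        totals[a.topic] = [right + int(a.correct), seen + 1]
    return {t: right / seen for t, (right, seen) in totals.items()}


def next_step(attempts: list[Attempt], rules: list[ContentRule]) -> Optional[str]:
    """Pick the resource for the weakest topic that is below its threshold.

    Returns None when no rule is triggered, so the caller can simply
    continue with the normal sequence.
    """
    mastery = mastery_by_topic(attempts)
    gaps = [
        (mastery[r.topic], r)
        for r in rules
        if r.topic in mastery and mastery[r.topic] < r.mastery_threshold
    ]
    if not gaps:
        return None
    return min(gaps, key=lambda g: g[0])[1].remedial_resource


attempts = [
    Attempt("fractions", False),
    Attempt("fractions", False),
    Attempt("algebra", True),
    Attempt("algebra", True),
    Attempt("algebra", False),
]
rules = [
    ContentRule("algebra", 0.8, "algebra-practice-set"),
    ContentRule("fractions", 0.8, "fractions-worked-examples"),
]
recommended = next_step(attempts, rules)  # "fractions-worked-examples"
```

The decision stays inspectable: every recommendation traces back to a rule your staff wrote and to attempts you can audit, rather than to an opaque score.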

- RAG over course packs, past papers and internal wikis — not unrestricted web answers

- Role-appropriate tone (school vs corporate academy) and audit-friendly logging where required

- Teachers stay in the loop: overrides, blocked topics and escalation paths you define
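The grounding and escalation behaviour above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: keyword overlap stands in for whatever retrieval method a real build would use (typically embedding search), and `Passage`, `build_grounded_request`, the `blocked_topics` set and the source labels are hypothetical names, not an existing API.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # label used for citation, e.g. a handbook section
    text: str


def tokens(text: str) -> set[str]:
    """Lower-cased alphanumeric word set; a crude stand-in for real indexing."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: list[Passage], min_overlap: int = 2) -> list[Passage]:
    """Return approved passages sharing at least min_overlap words with the question."""
    q = tokens(question)
    scored = [(len(q & tokens(p.text)), p) for p in passages]
    scored = [(score, p) for score, p in scored if score >= min_overlap]
    scored.sort(key=lambda sp: sp[0], reverse=True)
    return [p for _, p in scored]


def build_grounded_request(question: str, passages: list[Passage], blocked_topics: set[str]) -> dict:
    """Either prepare a source-restricted prompt or escalate to staff."""
    if tokens(question) & blocked_topics:
        return {"action": "escalate", "reason": "blocked topic"}
    hits = retrieve(question, passages)[:3]
    if not hits:
        # No approved material matches, so do not let the model improvise.
        return {"action": "escalate", "reason": "no approved source"}
    context = "\n".join(f"[{p.source}] {p.text}" for p in hits)
    prompt = (
        "Answer only from the sources below and cite them.\n"
        f"{context}\nQuestion: {question}"
    )
    return {"action": "answer", "prompt": prompt, "citations": [p.source for p in hits]}


course_pack = [
    Passage("Handbook 4.2", "Late submissions lose five percent per day unless an extension is approved."),
    Passage("Syllabus week 3", "Week 3 covers quadratic equations and factorisation."),
]
result = build_grounded_request(
    "What is the penalty for late submissions?", course_pack, blocked_topics={"memo"}
)
```

The point of the design is the two escalation branches: a question on a blocked topic, or one with no matching approved source, goes to a person instead of to the model.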

Assessment & feedback

Automated grading in production means structured pipelines: ingest submissions, apply your rubric, produce drafts and confidence signals, and send edge cases to staff. We do not promise to replace professional judgement on high-stakes exams — we reduce repetition and speed up first-pass feedback.

- Short answers & essays: Rubric-aligned comments with traceability to your criteria

- Code & structured tasks: Tests, style hints and human review for disputes

- Operational safety: Queues, versioning and explicit sign-off where your policy requires it
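As a rough sketch of the routing step in such a pipeline, the Python below checks drafted marks against a rubric and decides whether a submission goes to the human review queue. Everything here is an assumption for illustration: the `Criterion` and `DraftMark` types, the 0.8 confidence cut-off and the route names are hypothetical, and the drafting step that produces marks and confidence values is out of scope.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    max_marks: int


@dataclass
class DraftMark:
    criterion: str
    marks: int
    confidence: float  # 0.0 to 1.0, supplied by the drafting step
    comment: str


def triage(
    drafts: list[DraftMark],
    rubric: list[Criterion],
    min_confidence: float = 0.8,
    high_stakes: bool = False,
) -> dict:
    """Route a drafted result: first-pass feedback, or the human review queue."""
    limits = {c.name: c.max_marks for c in rubric}
    reasons: list[str] = []
    if high_stakes:
        # Policy choice: high-stakes work is always reviewed by staff.
        reasons.append("high-stakes task")
    for d in drafts:
        if d.criterion not in limits:
            reasons.append(f"unknown criterion: {d.criterion}")
        elif not 0 <= d.marks <= limits[d.criterion]:
            reasons.append(f"marks out of range: {d.criterion}")
        if d.confidence < min_confidence:
            reasons.append(f"low confidence: {d.criterion}")
    missing = set(limits) - {d.criterion for d in drafts}
    reasons.extend(f"missing criterion: {name}" for name in sorted(missing))
    route = "human_review" if reasons else "first_pass_feedback"
    return {"route": route, "reasons": reasons}


rubric = [Criterion("accuracy", 5), Criterion("method", 5)]
drafts = [
    DraftMark("accuracy", 4, 0.92, "Correct result, minor rounding slip."),
    DraftMark("method", 3, 0.55, "Working is partly unclear."),
]
decision = triage(drafts, rubric)
```

Recording the reasons alongside the route gives reviewers the context for why an item reached them, and gives you a log to tune thresholds against.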

What Zenovah builds

We do not resell a generic “AI for schools” product. We scope a custom stack: your LMS or portal, your document sources, your approval rules for learner-facing outputs. Patterns overlap with our document and workflow automation elsewhere — evaluation sets, logging, versioned prompts, and human review where outcomes matter.

Data and compliance are part of the brief: minimisation, retention, access controls and subprocessors are discussed up front. If you need a discovery phase before build, AI agency covers roadmap and feasibility; AI development is how we ship the APIs, review interfaces and monitoring your team can run.

AI supports educators and learners; it does not replace accountable teaching and assessment decisions. We design that boundary explicitly.

Scope your education AI

Share your context (school, university, corporate academy), systems and what learners should — and should not — get from an assistant. We reply with a feasible scope, not a canned demo. Contact Zenovah.

Get in touch